Understanding when to use a robots.txt file can transform how search engines interact with your website. It is not just a technical file; it is a strategic SEO tool that guides crawlers toward valuable content. When used correctly, it helps you manage crawl budget, keep crawlers out of low-value areas, and improve indexing efficiency.

Many website owners either ignore robots.txt or misuse it, which can lead to serious SEO issues. This guide will help you understand exactly when you should use a robots.txt file and how to apply it effectively. By the end, you will know how to make smarter decisions that improve your visibility in search results.

What Is A Robots.txt File And Why It Matters

A robots.txt file is a simple text file that tells search engine crawlers which pages or sections of your website they can access. It sits in your root directory and acts as the first point of interaction between your site and bots. When properly configured, it helps search engines prioritize the right content and avoid wasting resources.
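
Because the file always lives at the root of a host, you can work out where any site's robots.txt should be from any page URL on that site. The following is a minimal sketch of that lookup; the function name `robots_txt_url` and the example.com URLs are illustrative assumptions, not part of any standard tool.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt location for the host that serves page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, and drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


main_site = robots_txt_url("https://example.com/blog/post?id=1")
shop_site = robots_txt_url("https://shop.example.com/cart")
print(main_site)
print(shop_site)
```

Note that each host, including each subdomain, is expected to serve its own file, which is why the two example URLs resolve to different locations.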

You should think of robots.txt as a traffic controller for your website, directing bots where to go and where to stay away. This becomes especially important for large websites with thousands of pages, where crawl efficiency directly impacts indexing speed. In fact, improving crawl management can increase indexing rates by over 30 percent on content-heavy websites.

When monitoring how well your pages are being discovered, you can also use tools that help you check all your indexed pages at once while identifying gaps in your crawl strategy. This allows you to refine your robots.txt rules based on real indexing data instead of guesswork.

When You Should Use Robots.txt To Control Crawl Budget

You should use a robots.txt file when your website has a limited crawl budget and you want search engines to focus on your most valuable pages. Crawl budget refers to the number of pages a search engine bot will crawl on your site within a given timeframe. If bots waste time on irrelevant pages, your important content may not get indexed quickly.

For example, websites with filters, search parameters, or duplicate pages can confuse crawlers and dilute crawl efficiency. Blocking these unnecessary URLs ensures bots spend more time on pages that actually matter for ranking. This is particularly useful for eCommerce stores, blogs, and large directories.

Using robots.txt in this way helps you guide search engines toward high-priority content while reducing server load. It also ensures that newly published pages are discovered faster, which is critical for time-sensitive content and SEO growth.
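
To make the filter and search-parameter case concrete, here is a rough sketch of how wildcard Disallow patterns behave. It uses a small hand-written matcher for the `*` and `$` syntax that major search engines support, because Python's built-in parser appears to do plain prefix matching only. The patterns and paths are hypothetical and assume a store that uses `sort` and `sessionid` parameters.

```python
import re

# Hypothetical Disallow patterns; adjust them to your own URL scheme.
DISALLOW_PATTERNS = ["/search", "/*?sort=", "/*sessionid="]


def rule_matches(pattern: str, path: str) -> bool:
    """Check a robots.txt path pattern against a URL path plus query string."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then let every '*' match any run of characters.
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    if anchored:
        regex += "$"
    # re.match anchors at the start, which mirrors prefix matching.
    return re.match(regex, path) is not None


def is_blocked(path: str) -> bool:
    """Return True if any hypothetical Disallow pattern matches the path."""
    return any(rule_matches(pattern, path) for pattern in DISALLOW_PATTERNS)


for path in ["/search?q=red+shoes", "/shoes?sort=price", "/shoes/red-sneaker"]:
    print(path, "blocked" if is_blocked(path) else "crawlable")
```

This simplified matcher ignores Allow rules and rule precedence, so treat it as a way to reason about patterns rather than a full implementation.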

When You Should Use Robots.txt To Block Non-Essential Content

Another important use of robots.txt is blocking content that does not need to appear in search results. This includes admin pages, login areas, staging environments, and duplicate content paths. By restricting access to these areas, you maintain a cleaner and more focused index.

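For instance, pages like /wp-admin/, /cart/, or /checkout/ do not provide value to search users and should not be crawled. Allowing bots to access them wastes crawl budget. Keep in mind that robots.txt is publicly readable and is not an access control mechanism: listing a path there advertises it rather than hides it, so truly sensitive areas still need authentication. Blocking these paths keeps crawling efficient, but it does not make them secure.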
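
As an illustration, the rules for those three paths can be tested with Python's standard `urllib.robotparser` module before you publish them. This is a sketch under assumptions: the example.com domain and the sample product path are made up, and the rules apply to all crawlers.

```python
from urllib.robotparser import RobotFileParser

# Rules for the paths mentioned above, applied to every crawler.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /cart/
Disallow: /checkout/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def crawlable(path: str) -> bool:
    """Return True if a generic crawler may fetch the path on the example host."""
    return parser.can_fetch("*", "https://example.com" + path)


results = {
    path: crawlable(path)
    for path in ["/wp-admin/options.php", "/cart/", "/checkout/payment", "/products/blue-shirt"]
}
for path, allowed in results.items():
    print(path, "crawlable" if allowed else "blocked")
```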

Understanding how content is generated and structured can help you make better blocking decisions, especially when you review how content generators work and how to use them effectively so that low-value pages stay out of the crawl. This ensures your robots.txt file aligns with your overall content strategy.

When You Should Use Robots.txt For Large-Scale Websites

Large-scale websites benefit the most from a well-optimized robots.txt file. When you have thousands or even millions of pages, it becomes impossible for search engines to crawl everything efficiently. This is where robots.txt becomes essential for prioritization.

By blocking low-value sections such as tag archives, duplicate categories, or outdated content, you improve crawl efficiency significantly. This helps search engines focus on your core pages, which increases your chances of ranking higher in search results.

Additionally, large websites often deal with frequent updates, and managing crawl flow ensures that new pages are indexed quickly. This is especially useful for news sites, marketplaces, and content platforms that rely on fresh content visibility.

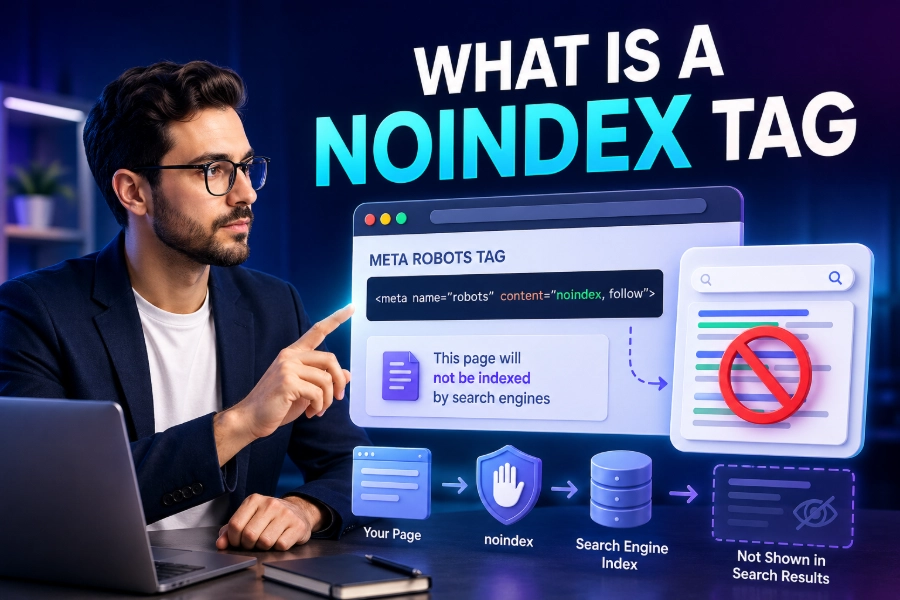

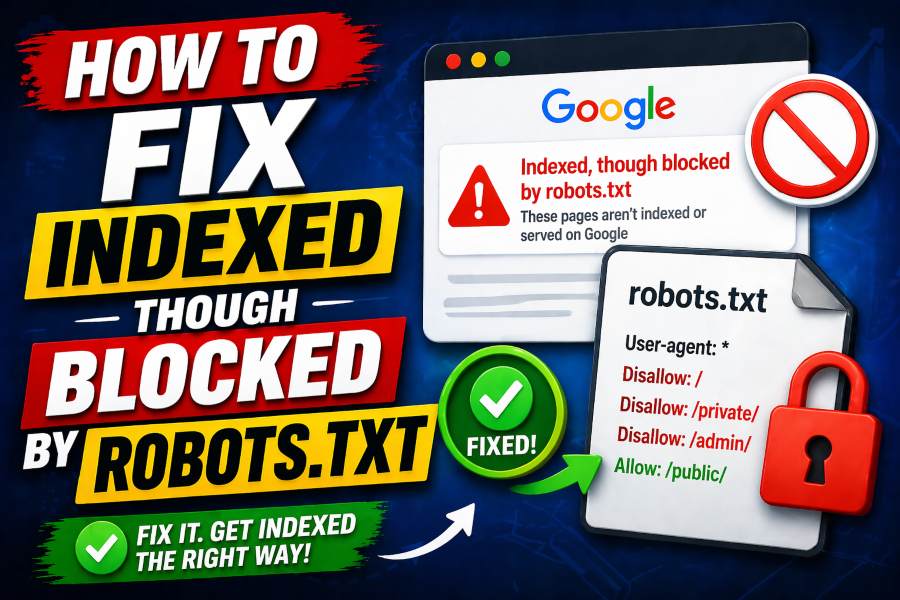

When You Should Not Use Robots.txt For Indexing Control

One common mistake is using robots.txt to prevent pages from appearing in search results. This approach is flawed because robots.txt only blocks crawling, not indexing. If a blocked page has backlinks, it may still appear in search results as a bare URL without a description.

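Instead, you should use a noindex directive (a robots meta tag or an X-Robots-Tag HTTP header) or proper canonicalization for indexing control. For noindex to work, the page must remain crawlable: if robots.txt blocks the URL, crawlers never see the directive. Robots.txt should only be used for crawl management, not for hiding content from search engines. Misusing it can lead to unintended SEO issues and loss of visibility.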
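
Because a crawler has to fetch a page to read its noindex directive, a page that is both blocked in robots.txt and marked noindex is a conflict worth catching. The sketch below flags such pages. The rules, the example.com domain, and the `NOINDEX_PAGES` list are hypothetical; in practice you would build that list from your own CMS or a site crawl.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules and pages, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /old-campaign/
"""

# Pages assumed to carry <meta name="robots" content="noindex">.
NOINDEX_PAGES = ["/old-campaign/landing", "/thank-you"]

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def hidden_noindex(paths: list[str]) -> list[str]:
    """Return noindex pages that crawlers cannot fetch, so the tag goes unseen."""
    return [
        path for path in paths
        if not parser.can_fetch("*", "https://example.com" + path)
    ]


conflicts = hidden_noindex(NOINDEX_PAGES)
print(conflicts)
```

For any path this reports, you would normally pick one mechanism: either lift the Disallow rule so the noindex can be read, or accept that the URL is only blocked from crawling.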

Understanding this distinction helps you avoid critical errors and ensures your SEO strategy remains effective. It also allows you to combine robots.txt with other tools for better control over your site’s performance.

When You Should Use Robots.txt To Protect Server Resources

High-traffic websites often experience heavy crawling activity, which can strain server resources. Using robots.txt to limit bot access can reduce unnecessary load and improve overall site performance. This is especially useful during peak traffic periods or major updates.

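By restricting bots from resource-heavy sections such as large media directories or endlessly generated URLs, you reduce the load crawlers put on your server, which helps keep pages fast for real visitors. Avoid blocking the CSS and JavaScript files your pages need to render, because search engines use them to understand the page. Faster websites tend to rank better and retain visitors longer.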

This approach also ensures that search engines crawl your site efficiently without overwhelming your infrastructure. It creates a balance between accessibility and performance optimization.
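
One way to limit crawler load, beyond Disallow rules, is the Crawl-delay directive. It is not part of the core standard: some crawlers such as Bing's honor it, while Google has documented that it does not. The sketch below shows how Python's `urllib.robotparser` reads it. The delay value, the `/media/raw/` path, and the `OtherBot` name are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: slow one crawler down and keep all bots out of raw media.
ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 10
Disallow: /media/raw/

User-agent: *
Disallow: /media/raw/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Seconds the named crawler is asked to wait between requests, or None if unset.
bing_delay = parser.crawl_delay("bingbot")
other_delay = parser.crawl_delay("OtherBot")
raw_media_allowed = parser.can_fetch("bingbot", "https://example.com/media/raw/video.mov")

print(bing_delay, other_delay, raw_media_allowed)
```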

When You Should Use Robots.txt For Duplicate Content Management

Duplicate content is a common issue that can confuse search engines and dilute ranking signals. Robots.txt can help you prevent bots from crawling duplicate pages, especially those generated by filters, parameters, or session IDs.

Blocking these duplicates ensures that search engines focus on the original and most relevant version of your content. This strengthens your SEO signals and improves your chances of ranking higher.

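However, robots.txt and canonical tags work in different ways, and a crawler cannot read a canonical tag on a page it is not allowed to fetch. Use robots.txt for duplicates that should never be crawled, and canonical tags for duplicates that stay crawlable. This ensures that both crawling and indexing are properly managed without conflicts.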

When You Should Use Robots.txt For Staging And Development Sites

If you are working on a staging or development version of your website, robots.txt becomes critical. It tells search engines not to crawl unfinished or duplicate versions of your site. Because blocked URLs can still be indexed when other sites link to them, pair it with password protection where you can. Without proper restrictions, these pages may accidentally appear in search results.

Blocking staging environments ensures that only your live website is indexed. This protects your SEO performance and prevents confusion for users and search engines.

It also helps maintain a clean and professional online presence while you continue to develop and test new features behind the scenes.
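
A common way to avoid shipping the wrong rules is to generate robots.txt per environment. The following is a minimal sketch, assuming a hypothetical `environment` value of either "production" or anything else, plus made-up paths and a made-up sitemap URL.

```python
from urllib.robotparser import RobotFileParser


def robots_txt_for(environment: str) -> str:
    """Return robots.txt content for the given deployment environment."""
    if environment == "production":
        # Hypothetical production rules; replace with your real ones.
        return (
            "User-agent: *\n"
            "Disallow: /admin/\n"
            "Sitemap: https://example.com/sitemap.xml\n"
        )
    # Staging, development, and anything unknown: ask all crawlers to stay out.
    return "User-agent: *\nDisallow: /\n"


def homepage_crawlable(robots_txt: str) -> bool:
    """Check whether a generic crawler may fetch the homepage under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("*", "https://example.com/")


staging_ok = homepage_crawlable(robots_txt_for("staging"))
production_ok = homepage_crawlable(robots_txt_for("production"))
print(staging_ok, production_ok)
```

Defaulting to the blocking rules for any unrecognized environment is a deliberate choice here: a typo in the environment name then fails closed instead of exposing a test site to crawlers.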

When You Should Use Robots.txt To Guide Specific Crawlers

Not all bots behave the same way, and robots.txt allows you to create rules for specific user agents. This means you can control how different search engines interact with your website. For example, you might allow Googlebot full access while restricting less important crawlers.

This level of control helps you prioritize high-value search engines while limiting unnecessary bot activity. It also allows you to experiment with different crawling strategies to improve performance.

Advanced users can use this feature to fine-tune their SEO approach and gain better control over how their content is discovered.
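
Per-crawler rules are written as separate User-agent groups. The sketch below checks such a file with Python's `urllib.robotparser`. The bot name `ExampleBot`, the `/internal/` path, and the example.com domain are placeholders, not recommendations about which crawlers to block.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical groups: full access for one crawler, none for another,
# and a default rule for everyone else.
ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: ExampleBot
Disallow: /

User-agent: *
Disallow: /internal/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(agent: str, path: str) -> bool:
    """Return True if the named crawler may fetch the path on the example host."""
    return parser.can_fetch(agent, "https://example.com" + path)


googlebot_internal = allowed("Googlebot", "/internal/report")
examplebot_blog = allowed("ExampleBot", "/blog/post")
other_internal = allowed("SomeOtherBot", "/internal/report")
other_blog = allowed("SomeOtherBot", "/blog/post")
print(googlebot_internal, examplebot_blog, other_internal, other_blog)
```

A crawler that finds a group naming it follows only that group, which is why the Googlebot group here is not affected by the default `/internal/` rule.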

When You Should Use Robots.txt Alongside Sitemap Optimization

Including your sitemap in robots.txt is a smart way to guide search engines toward your most important pages. It acts as a roadmap that complements your crawl instructions and improves indexing efficiency.

Search engines rely on sitemaps to discover new and updated content quickly. By linking your sitemap in robots.txt, you create a seamless experience for crawlers.

This combination ensures that your most valuable pages are prioritized and indexed faster, which directly impacts your search visibility.
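
The sitemap reference is a single `Sitemap:` line with an absolute URL. As a quick check, Python's `urllib.robotparser` can read it back through `site_maps()`, which is available from Python 3.8. The sitemap URL and the `/cart/` rule below are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: one crawl rule plus a sitemap reference.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns the listed sitemap URLs, or None if there are none.
sitemaps = parser.site_maps()
print(sitemaps)
```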

When You Should Use Robots.txt For Content Strategy Alignment

Your robots.txt file should reflect your overall content strategy. If you are producing large volumes of content, you need to ensure that only high-quality pages are accessible to crawlers. This prevents low-value content from affecting your SEO performance.

For example, understanding what an AI writing assistant is and the benefits of using one can help you create better content while ensuring only optimized pages are indexed. This aligns your technical SEO with your content goals.

By maintaining this alignment, you create a strong foundation for sustainable SEO growth and improved rankings.

When You Should Regularly Update Your Robots.txt File

Your robots.txt file should not remain static as your website evolves. As you add new sections, remove outdated pages, or change your structure, you need to update your crawl rules accordingly.

Regular audits help you identify areas where crawl efficiency can be improved. This ensures that search engines always have accurate instructions for navigating your site.

Keeping your robots.txt file updated is a simple yet powerful way to maintain strong SEO performance over time.
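
One possible audit step is to compare the old and new versions of the file against a list of URLs you care about, and flag any whose crawlability changed. The sketch below assumes two hypothetical rule sets, where the new one accidentally blocks the blog, and a hand-picked list of paths on example.com.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical old and new rule sets; the new one blocks /blog by mistake.
OLD_RULES = "User-agent: *\nDisallow: /tmp/\n"
NEW_RULES = "User-agent: *\nDisallow: /tmp/\nDisallow: /blog\n"


def load(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a parser object."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def changed_paths(old_txt: str, new_txt: str, paths: list[str]) -> dict[str, tuple[bool, bool]]:
    """Map each path whose crawlability differs to its (before, after) status."""
    old, new = load(old_txt), load(new_txt)
    changes = {}
    for path in paths:
        url = "https://example.com" + path
        before, after = old.can_fetch("*", url), new.can_fetch("*", url)
        if before != after:
            changes[path] = (before, after)
    return changes


changes = changed_paths(OLD_RULES, NEW_RULES, ["/blog/launch", "/tmp/cache", "/pricing"])
print(changes)
```

Running a check like this before each deployment gives you a short list to review instead of rereading the whole file.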

Conclusion

Knowing when you should use a robots.txt file gives you a powerful advantage in managing your website’s SEO performance. It allows you to control crawl behavior, prioritize valuable content, and protect your site from unnecessary indexing issues. When used strategically, it becomes an essential part of your technical SEO toolkit.

You should use robots.txt when you want to guide search engines, not restrict them blindly. Focus on improving crawl efficiency, keeping crawlers out of non-essential areas, and aligning your file with your content strategy. With consistent updates and smart implementation, you can ensure your website remains optimized, discoverable, and competitive in search results.